It’s tradition when talking about space electronics to open with Telstar. Telstar 1 was the first commercial satellite to fail due to radiation effects. The US exploded a nuke in Earth’s upper atmosphere the day before Telstar was launched. That nuclear blast knocked out streetlights, set off burglar alarms, and damaged telecoms infrastructure in Hawaii, 900 miles away from the detonation. The charged particles from that explosion hung out in orbit and created man-made radiation belts that lasted for more than 5 years. At least six satellites failed due to the additional radiation from the blast.

Most spacecraft don’t have to deal with nuclear fallout (floatup?), but the radiation environment outside of Earth’s atmosphere is still a fearsome thing. A high energy particle can destroy sensitive electronics, and even low energy particles can eventually sandblast a circuit into submission. So how do we trust the satellites we have in orbit now? How can we send robots to Mars and expect them to work when they get there?

Before we can say how people build reliable computers in space, we need to know exactly what might happen to them there. There’s an enormous number of engineering constraints for space hardware. They range from thermal management to power management to vibration. Most of these concerns have terrestrial analogues. Car makers in particular have gotten good at a number of these issues. I’m going to focus here only on the impacts of space radiation, as they are the most different from what you might focus on when you’re designing hardware for Earth.

Space Weather? More like space bullets

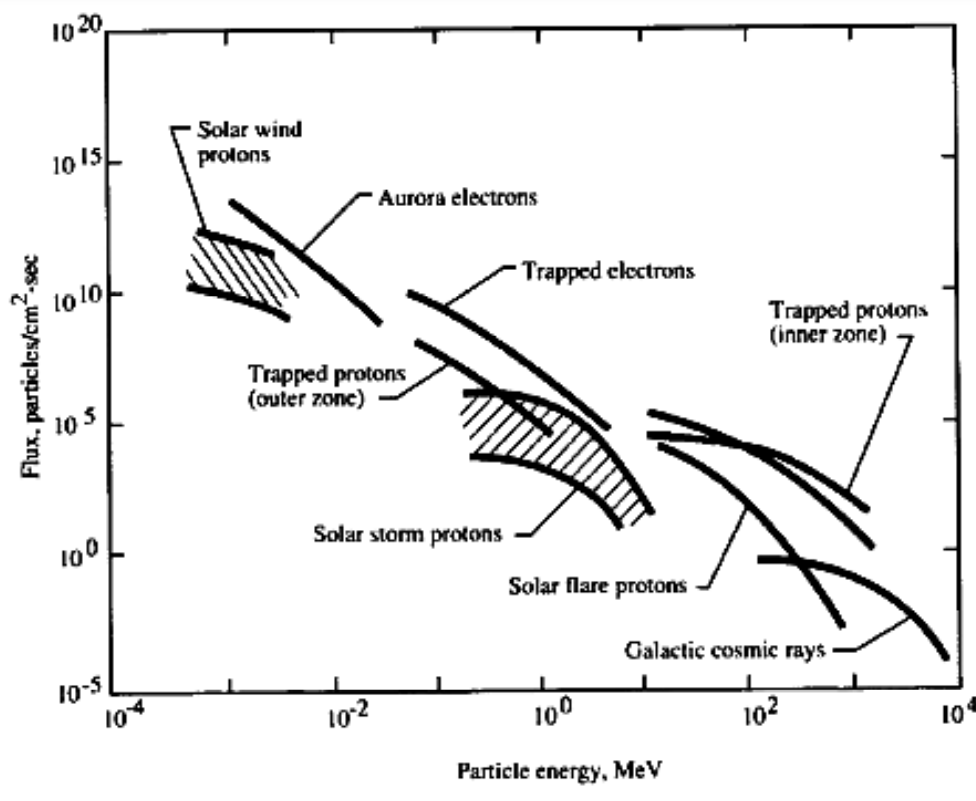

Empty space is full of charged particles. It’s like putting electronics in a very very diffuse dust storm, and then letting that dust bombard the electronics. But instead of dust, the particles in space are generally individual electrons or atomic nuclei. Many of these come from the sun. In addition to putting out the photons that drive all life on Earth, our star also spits out electrons, neutrons, protons, even helium nuclei and large elements.

The number and type of particles that our sun spits out is variable and somewhat unpredictable. There’s an 11 year solar cycle, where the sun puts out more particles for a while and then fewer. That cycle is only 11 years long on average though; it could be as short as 9 or as long as 13. That means if you’re planning a mission that’s 5 to 10 years away, you can only make a good guess about what the solar cycle will be doing at launch. Aside from the cycle, you also have to worry about coronal mass ejections and solar flares, where the sun just burps out a ridiculously huge amount of radiation all at once. These ejections have shut down satellites, broken national electrical grids, and just generally made life harder for electrical engineers on Earth and in space.

Aside from our star, you also have to worry about every other star in the universe too. The particles that don’t come from our sun are generally lumped into one category called Galactic Cosmic Radiation (why is it galactic and cosmic at the same time?). If this Galactic Cosmic Radiation makes it near Earth, it’s probably pretty energetic. Lots of energy means lots of chances to hurt our stuff, so we definitely need to watch out for those.

Regardless of the source of the particle, there’s really only three types of particle that we have to worry about: electrons, protons, and heavy nuclei.

Electrons are very very small. They don’t have much mass, so they often don’t carry much energy. They only have a charge magnitude of 1 unit. There are a ton of these in space, so these are the sand that you generally get sandblasted with. Each individual particle doesn’t do much, but they add up.

Protons are pretty big; they’re around 1,800 times more massive than an electron. Even though they have an electrical charge that’s the same magnitude as an electron’s, they’ll often carry more energy due to their larger mass. You can think of these like gravel in a sandstorm. There are fewer of them, but they hit you harder. And some of them have enough energy to really hurt you all on their own.

Finally, there’s the heavy nuclei. A proton is just a hydrogen atom without the electron bound to it. Heavy nuclei are the same thing, but for larger atoms like helium. With Galactic Cosmic Rays, you may even be hit by uranium nuclei. Each of these particles has more mass and more electric charge than a proton, so they’ll generally carry more energy. It’s like being hit by a rock. There are far fewer of these heavy nuclei though. Galactic Cosmic Radiation, for example, is 85% protons, 14% helium nuclei, and only 1% heavier nuclei.

There’s a lot to be said about space weather here. Where you are in space, whether you’re in low earth orbit or on your way to the moon, has a huge impact on how much radiation you see. So does the solar cycle, and even the specific orbital plane you may be in when orbiting the Earth. These details are highly important if you’re going to be designing hardware for a real mission, as they’ll drive the radiation tolerance specification that your hardware has to meet. For now, we don’t need to care about that. We don’t have a real mission to design, we’re just trying to understand the general risks to hardware.

So let’s say you’ve got some particle flying around space. Probably it’s a tiny electron, but there’s a small possibility it could be an enormous uranium nucleus or something. What happens when that particle hits your electronics?

Circuit Collisions

An atom is like a chocolate covered coffee bean. You’ve got the small and hard nucleus with a ton of contained energy, and that’s surrounded by a soft and squishy electron shell. Maybe back in high school you saw a drawing of an atom that was like an electron-planet orbiting a nucleus-sun? That’s not accurate. The electrons really do form a (delicious, chocolatey) shell around the nucleus due to quantum something-something (look, this isn’t supposed to be a physics post).

This is important because when a charged particle from the depths of space hits our coffee-bean atom, it’s going to bounce off of that electron shell. When it does, it’ll deposit some of its energy into the electron. Depending on how much energy it leaves behind, it could knock an electron free of the atom it hit. If you look at a chip that’s been hit by a cosmic ray, you’ll often detect tracks of electrons that have been knocked free from their atoms as the incoming particle bounces around like a pachinko ball in the atomic lattice of the chip.

What do these extra free charges in your circuit actually do? That depends completely on where they are. Lots of them may do nothing, especially if the particle hits copper or PCB substrate. If the particle passes through a transistor or a capacitor, the extra charge can do more damage, especially as they build up.

Chips that are rated for use in space have a specification called Total Ionizing Dose (TID), measured in rads. The rating is the accumulated dose the chip is specified to survive before it stops meeting its spec. Each incoming particle adds a bit more to the total dose that the chip has received, increasing the number of additional electrons (and technically holes as well). These extra charges can build up in transistors and diodes, changing switching characteristics or power draw. Eventually, the buildup can force transistors into an always-on state, and then your Central Processing Unit can’t Process anymore.

There’s another failure mode, too. You might have a particle hit your chip with enough energy that that single event causes a problem. These events, creatively called Single Event Effects, can range in impact. You might be looking at something as small as a bit flip or as large as a short from power to ground. Even a bit flip isn’t necessarily small, if it changes the operation of a critical algorithm at the wrong moment.

The effects of a SEE depend completely on how your chip was made, and I haven’t had any luck finding information about how to predict that kind of thing from first principles. In practice, I think chip makers just make their chip and then shoot radiation at it to see what happens. If they don’t see any SEEs, they say it’s good.

When they’re testing their chips for susceptibility to SEEs, manufacturers use a measurement called Linear Energy Transfer (LET). That tells you how much energy the incoming particle hands off to the material per unit of distance it travels, normalized by the material’s density, which is why it’s quoted in MeV-cm^2/mg. Higher LET values mean more charge freed along the particle’s track and more potential for damage. While TID is more related to the slow buildup of defects in the chip, SEEs are dictated by whether a single particle with a given LET can cause your chip to fail on its own. So if you test all the parts of a chip up to the LET you might see in flight, you can have confidence that it won’t fail. In one 1996 experiment, a strong falloff in space particle counts was seen for LET values above about 10 MeV-cm^2/mg. If you don’t have any SEEs for impacts around that level or below, you might consider yourself kind of safe.
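To get a feel for what that odd MeV-cm^2/mg unit means physically, here is a rough back-of-the-envelope sketch. It assumes the target is plain silicon (density 2.33 g/cm^3) and that freeing one electron-hole pair costs about 3.6 eV; both are textbook approximations I’m supplying, not numbers from any test report, and the function names are made up for illustration.

```python
# Rough conversion from LET to energy and charge deposited per micron in silicon.
# Assumptions (not from the article): silicon density and pair-creation energy.
SILICON_DENSITY_MG_PER_CM3 = 2330.0   # 2.33 g/cm^3
EV_PER_ELECTRON_HOLE_PAIR = 3.6       # approximate value for silicon
ELECTRON_CHARGE_C = 1.602e-19
CM_PER_MICRON = 1e-4


def let_to_mev_per_micron(let_mev_cm2_per_mg: float) -> float:
    """Energy lost per micron of track in silicon, in MeV."""
    # Multiplying by density turns MeV-cm^2/mg into MeV/cm.
    return let_mev_cm2_per_mg * SILICON_DENSITY_MG_PER_CM3 * CM_PER_MICRON


def let_to_pc_per_micron(let_mev_cm2_per_mg: float) -> float:
    """Charge freed per micron of track in silicon, in picocoulombs."""
    ev_per_micron = let_to_mev_per_micron(let_mev_cm2_per_mg) * 1e6
    pairs_per_micron = ev_per_micron / EV_PER_ELECTRON_HOLE_PAIR
    return pairs_per_micron * ELECTRON_CHARGE_C * 1e12


if __name__ == "__main__":
    for let in (1.0, 10.0, 40.0):
        print(f"LET {let:5.1f} MeV-cm^2/mg -> "
              f"{let_to_mev_per_micron(let):.3f} MeV/um, "
              f"{let_to_pc_per_micron(let):.3f} pC/um")
```

Under those assumptions, a particle at the 10 MeV-cm^2/mg level leaves roughly a tenth of a picocoulomb of free charge for every micron of silicon it crosses. Whether that is enough to flip a bit depends entirely on the chip, which is why parts get tested rather than predicted.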

You might also ask: what happens if an incoming particle hits the coffee bean in the center of all that chocolate? I mean: what happens if the particle hits the atomic nucleus of an atom in a semiconductor lattice? This can and does happen, and the impacts are pretty similar to what was described above for impacts to the electron shell. In general this is less likely. Electrons usually don’t have enough energy to make it all the way to an atomic nucleus. Heavier nuclei also have more electric charge, so they get pushed harder away from the nucleus and generally can’t make it in either. It’s usually the protons that hit a nucleus, pushing the nucleus out of position or causing it to decay into other atomic elements. Functionally you’ve got the same TID and SEE failure modes though.

A similar failure mode to Total Ionizing Dose is called Displacement Damage. This is caused not by freed electrons, but by nuclei that have been pushed out of their places in the lattice. In practice this can have similar effects to TID.

Reliability at the IC level

The best way to avoid radiation problems in an electronic circuit is to build your circuit out of parts that don’t have problems with radiation. This is what government satellites have always done, and it’s what commercial satellites have mostly done until very recently. You can pick CPUs, RAM, etc. that have been designed to withstand radiation. These parts are likely to be larger, more expensive, and lower performance than their non-radiation-hardened equivalents.

It takes a lot of money and time to design a chip to be rad-hard, and there aren’t really a lot of customers out there that buy them. That means that chip manufacturers are likely to keep old technologies around for a while to let them pay off their capital investments. Satellite designers generally don’t mind this, because if they take a risk on a newer part that doesn’t have “space heritage”, their satellite might die before it manages to pay off its own capital costs. Many large satellite designers won’t switch to a new chip unless they literally have to in order to hit their functional requirements. Conservative design decisions dominate the space industry.

So when you are designing your spacecraft, you’ll probably start by making a list of all the parts you want. For each of those parts, you can look for rad-hard versions of them. You’ll look for chips with a TID rating that’s above what your mission might experience (including some safety margin). You’ll also look for chips that have been tested and shown not to experience severe SEEs at LET levels up to what you might experience in your mission.
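That selection process boils down to two comparisons per part, which can be sketched in a few lines. Everything specific here is a placeholder I invented for illustration: the part names, their ratings, the dose rate, the mission length, and the 2x safety margin. A real mission would get its numbers from an environment model and from datasheets.

```python
from dataclasses import dataclass


@dataclass
class Part:
    name: str
    tid_rating_krad: float   # rated Total Ionizing Dose
    see_free_let: float      # highest LET (MeV-cm^2/mg) tested with no severe SEE


def required_tid_krad(dose_rate_krad_per_year: float, years: float,
                      margin: float = 2.0) -> float:
    """Expected mission dose multiplied by a safety margin (margin is an assumption)."""
    return dose_rate_krad_per_year * years * margin


def acceptable(part: Part, tid_needed_krad: float, let_needed: float) -> bool:
    """A part passes only if it clears both the TID and the SEE requirement."""
    return (part.tid_rating_krad >= tid_needed_krad
            and part.see_free_let >= let_needed)


if __name__ == "__main__":
    # Hypothetical parts list; none of these are real components.
    parts = [
        Part("commercial_cpu", tid_rating_krad=5.0, see_free_let=1.0),
        Part("rad_tolerant_cpu", tid_rating_krad=30.0, see_free_let=20.0),
        Part("rad_hard_cpu", tid_rating_krad=100.0, see_free_let=60.0),
    ]
    tid_needed = required_tid_krad(dose_rate_krad_per_year=3.0, years=5.0)
    candidates = [p.name for p in parts if acceptable(p, tid_needed, let_needed=10.0)]
    print(f"Need {tid_needed} krad; candidates: {candidates}")
```

The point of keeping the two checks separate is that they fail differently: a short mission can relax the TID requirement, but it does nothing for the SEE requirement, since a single particle can do the damage on day one.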

If you’re really serious, you’ll only buy chips from manufacturers that spot test every lot of parts that they make. Some parts are only tested during the design phase, and then you assume that their production runs are good enough. It turns out that there’s enough variance in performance from one production lot to the next that, if you have a critical component in a high radiation mission, using a part made one week after another can make a crucial difference. If that mission needs that level of reliability, you pay for the extra testing.

But let’s say that your mission actually isn’t that critical. You want to put a phone in low earth orbit for a few days to take some pictures. If it fails, nobody dies and nobody loses their job. In this case, a literal off-the-shelf phone might be good enough. Most commercial components can handle somewhere between 1 krad and 30 krad of TID. That means that if your mission is short enough then you might be able to ignore the TID effects. SEE is still a crapshoot though, and there’s always the possibility that some commercial part is on the low end of the TID range and you’re in a solar maximum.

If you want to save money, but you also want more reliability than smartphone components will give you, you can use a rad-hard management CPU to control a higher performance commercial CPU. The rad-hard CPU is expensive, so you buy a super low performance one and just use it to watch the high performance CPU for errors. If the rad-hard CPU sees an error, it can reset things to a good state (probably, depending on the error). That leads us to our next method of dealing with space radiation.

Active Error Checking

The next thing you’ll want to do to make your spacecraft robust is to actively look for errors at runtime and try to correct them on the fly. This often gets rounded down to making critical systems redundant, but there’s actually a lot more to it. The aerospace industry has developed an enormous set of methods for doing this, ranging from error correcting codes on any data stored in RAM to running every algorithm on multiple CPUs and comparing results. It’s also very common to give critical systems a watchdog. If those systems don’t pat the dog often enough, the watchdog will reset the system. Another common technique is to put current monitors on all the power rails. If there’s a sudden current spike, you might have a SEE that’s causing a short. If the current monitor can trigger a reset fast enough, you might not lose the hardware.
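Two of those ideas are small enough to sketch directly: comparing the results of three redundant computations, and a watchdog that notices when nobody has patted it. This is a toy software model under my own assumptions, not how any particular flight computer does it. Real systems usually implement both in hardware, and the `majority_vote` and `Watchdog` names are made up here.

```python
import time


def majority_vote(a: int, b: int, c: int) -> int:
    """Bitwise 2-of-3 vote: each output bit matches at least two of the inputs."""
    return (a & b) | (a & c) | (b & c)


class Watchdog:
    """Software model of a watchdog timer.

    `clock` is injectable so the behaviour can be exercised without sleeping.
    """

    def __init__(self, timeout_s: float, clock=time.monotonic):
        self.timeout_s = timeout_s
        self.clock = clock
        self.last_pat = clock()

    def pat(self) -> None:
        """Called by the supervised system to prove it is still alive."""
        self.last_pat = self.clock()

    def expired(self) -> bool:
        """True once more than timeout_s has passed since the last pat."""
        return self.clock() - self.last_pat > self.timeout_s


if __name__ == "__main__":
    # Three copies of a result, one of which took a single bit flip.
    good = 0b1011_0010
    flipped = good ^ 0b0000_1000
    print(bin(majority_vote(good, flipped, good)))

    # Drive the watchdog with a fake clock instead of real time.
    fake_time = [0.0]
    dog = Watchdog(timeout_s=1.0, clock=lambda: fake_time[0])
    fake_time[0] = 0.5
    dog.pat()
    fake_time[0] = 2.0
    if dog.expired():
        print("watchdog expired: reset the system")
```

The voter masks any single corrupted copy, but it can’t help if two copies are wrong in the same bit, and the watchdog only catches hangs, not wrong answers. That is part of why real designs layer several of these techniques together.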

While this section is pretty short, that is mainly because these techniques are so varied and so application dependent. When it comes to designing a system for space, more design time is probably spent on active error checking than on any other mitigation method.

Shielding

Finally, you’re going to want to shield your electronics. If you can just keep the particles from hitting your chips, then you don’t have to worry. Almost all electronics in space are shielded in some way or another. Often designers will include an aluminum box around all their electronics.

A note on material. When I first started learning about this, I was comparing space radiation shielding to the lead aprons in a dentist’s office. I started out thinking aluminum was used in space because it was lighter than lead (cheaper to launch), and that as launch costs dropped we’d change materials. This turns out not to be true. If you bombard lead with protons or heavy nuclei, the lead nuclei can break apart into showers of secondary particles that can damage your circuit even more than the original cosmic ray. Lead is used in the dentist’s office because you’re just trying to block X-rays, which aren’t going to break lead nuclei apart. Aluminum produces fewer of these secondaries when it’s hit by a galactic cosmic ray, so it is much more effective as a shield.

In fact, it turns out that materials with lower atomic numbers generally do best for radiation shielding (in terms of shielding per unit mass). This is such a large factor that NASA is looking into ways to use hydrogen plasmas to shield against radiation on manned missions. With electronics, we generally want to use a conductive shield because that leads to better EMI performance. There’s also the fact that electronics are usually much more space constrained than humans, so we probably want denser material to save space. This does leave me wondering why aluminum is used so often instead of magnesium. My guess is that, since you pretty much only get magnesium in MgO, it’s not conductive enough to make a good EMI can.

Now we know that we want a shield that’s a light element (low atomic number). We also know that we need a conductive shield to meet our other product requirements. This explains why we pick aluminum. But then we ask: how thick should our shield be?

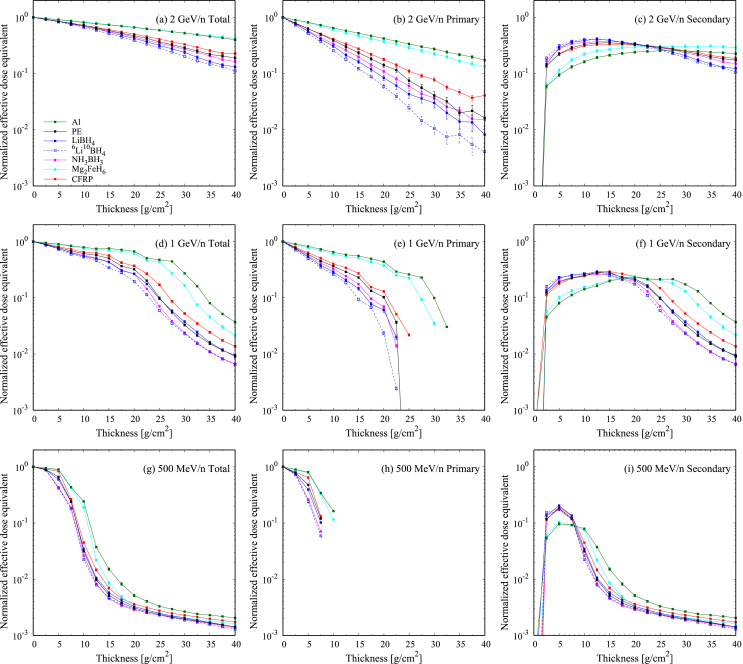

One shielding experiment was performed on a variety of materials to look at how much radiation made it through.

The experiments there show thickness measured in g/cm^2. This weird unit makes sense when you consider that you have to pay launch costs by the kilogram. Two shielding materials may be very different thicknesses, but you really want to compare by mass (assuming you have the volume for them, which electronics probably don’t). Let’s just focus on the data for aluminum, which has a density of 2.7 g/cm^3. That means we can calculate real life thicknesses in centimeters by dividing the values on the plot’s x-axis by 2.7.
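As a quick sketch of that conversion, the snippet below turns an areal density into a physical thickness and into the mass of a shield with a given surface area. The sample areal densities and the 600 cm^2 box (the surface of a 10 cm cube) are illustrative inputs I picked, not values read off the plot.

```python
ALUMINUM_DENSITY_G_PER_CM3 = 2.7


def thickness_cm(areal_density_g_per_cm2: float,
                 density_g_per_cm3: float = ALUMINUM_DENSITY_G_PER_CM3) -> float:
    """Physical thickness of a shield with the given areal density."""
    return areal_density_g_per_cm2 / density_g_per_cm3


def shield_mass_kg(areal_density_g_per_cm2: float, area_cm2: float) -> float:
    """Mass of a shield covering area_cm2, independent of the material chosen."""
    return areal_density_g_per_cm2 * area_cm2 / 1000.0


if __name__ == "__main__":
    box_area_cm2 = 600.0  # hypothetical enclosure: surface of a 10 cm cube
    for areal in (1.0, 10.0, 30.0):  # illustrative values only
        print(f"{areal:5.1f} g/cm^2 -> {thickness_cm(areal):5.2f} cm of aluminum, "
              f"{shield_mass_kg(areal, box_area_cm2):5.1f} kg for the box")
```

Note that the mass calculation never looks at the material’s density. That is exactly why the experiments plot against g/cm^2: it lets you compare shields by what they cost to launch.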

These plots show that for high energy particles of 1 GeV or more, we don’t get a ton of shielding unless our aluminum box is more than 11 cm thick. That’s probably too thick for anything outside of the Apollo Guidance Computer. What about the more common energies of 500 MeV? For those, an enclosure thickness of 4 cm cuts out all the primary particles and many of the secondary particles created by collisions. That’s still pretty thick, but feasible for some missions.

This also helps show why shielding primarily helps with Total Ionizing Dose. A thick enough shield can cut down most of your low energy particles. The higher the energy, the more likely those particles are to make it through your shield with only a slight energy decrease. That means shielding is likely not a great way of solving SEEs, though you could make them less common with a thick enough shield.

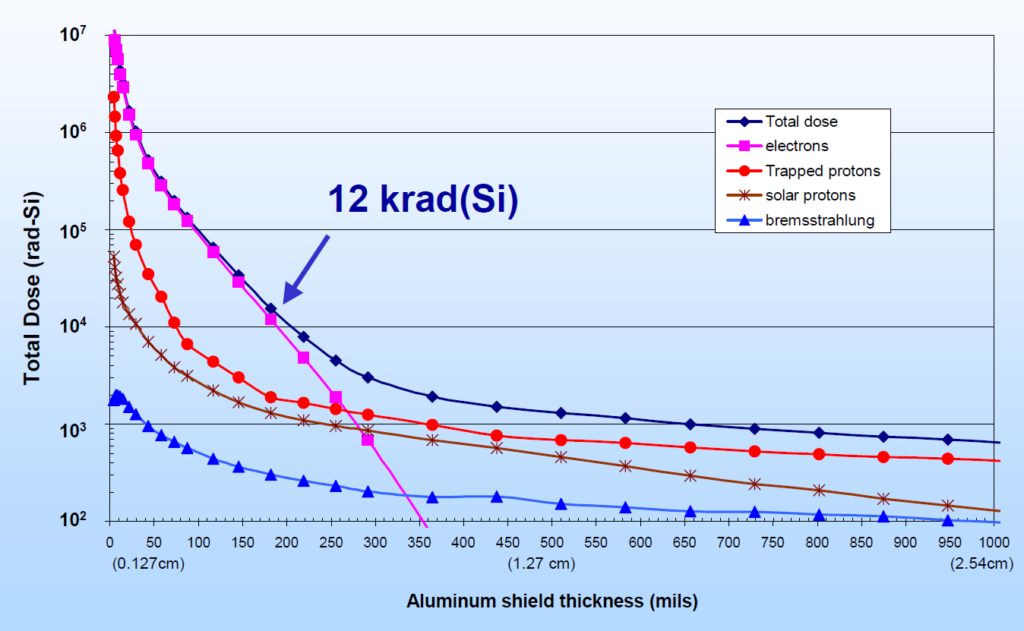

Experiments with single energies may not give you the information you want regarding TID. One thing that’s been done is putting radiation sensors in space surrounded by varying thicknesses of aluminum. You can then look at the TID after some given duration. Kenneth LaBel shows some of this data in a presentation from 2004.

These numbers depend a great deal on the specific orbit that the experiment was carried out in, but they do give you a sense for what to expect. For an orbit like this (ranging from 200 km to 35,790 km), there are obvious diminishing returns for shielding thicknesses above about a third of an inch.

Zap

Space. The final frontier. Full of radiation. And that radiation is just particles zipping about. If they hit your circuit, you might get very sad. In general, you can predict how much radiation a spacecraft might see on any given mission, and you can design in mitigations for that radiation. If you’re willing to pay the price in dollars and mass and design time, you can make systems that should survive for quite some time in space (assuming they don’t have a nuke shot at them).

Resources

- A brief history of electronic reliability in space, including today’s risks and how to mitigate them
  https://www.electronicproducts.com/Aerospace/Spacecraft/A_brief_history_of_electronic_reliability_in_space_including_today_s_risks_and_how_to_mitigate_them.aspx

- Radiation Effects on Electronics 101: Simple Concepts and New Challenges
  https://nepp.nasa.gov/docuploads/392333B0-7A48-4A04-A3A72B0B1DD73343/Rad_Effects_101_WebEx.pdf

- NASA Space Radiation eBook
  https://www.nasa.gov/sites/default/files/atoms/files/nasa_space_radiation_ebook_0.pdf

- The Impact of Space Radiation Environment on Satellites Operation in Near-Earth Space
  https://www.intechopen.com/online-first/the-impact-of-space-radiation-environment-on-satellites-operation-in-near-earth-space

- Howard Jr, J. W., and D. M. Hardage. “Spacecraft Environments Interactions: Space Radiation and Its Effects on Electronic System.” (1999).

- Nwankwo, Victor U. J., Nnamdi N. Jibiri, and Michael T. Kio. “The impact of space radiation environment on satellites operation in near-Earth space.” Satellites Missions and Technologies for Geosciences. IntechOpen, 2020.

- Introduction to Radiation Shielding
  https://www.nasa.gov/sites/default/files/files/SMIII_Problem25.pdf

- Naito, Masayuki, et al. “Investigation of shielding material properties for effective space radiation protection.” Life Sciences in Space Research (2020).

- Pham, T. T., and Mohamed S. El-Genk. Simulations of space radiation interactions with materials and dose estimates for a lunar shelter and aboard the international space station. Technical Report ISNPS-UNM-1-2013, Institute for Space and Nuclear Power Studies (ISNPS), University of New Mexico, 2013.